A Cognitive Computing Continuum only becomes truly cognitive when it can do more than connect distributed resources. It must also be able to anticipate, detect, learn, and act on what is happening across the infrastructure. This is exactly the role of ENACT’s Cognitive Services, which form the intelligence layer of the platform. These services combine predictive AI, anomaly detection, model training capabilities, and AI-driven orchestration to help the continuum respond more intelligently to changing conditions.

At a high level, ENACT’s Cognitive Services can be understood as a set of complementary mechanisms working together. On one side, the platform includes the AI Forecaster, the Anomaly Detection function, and the Virtual Training Environment (VTE). On the other, it includes the first version of the AI-Orchestrator, which combines Deep Reinforcement Learning (DRL) and Graph Neural Networks (GNNs) to support dynamic workload placement. Together, these components aim to make the compute continuum not just distributed, but also predictive, adaptive, and increasingly autonomous.

The AI Forecaster is one of the central building blocks in this layer. Its purpose is to predict future system-level metrics such as CPU usage, memory load, latency, energy consumption, and other performance indicators. This is important because orchestration decisions become far more effective when they are not based only on the current state of the system, but also on its likely near-future behavior. The Forecaster is structured around three functional modules: a Data Analysis Module for preprocessing telemetry and contextual data, an AI Forecasting Module for generating the predictions, and a Data Exchange Module for interfacing with other ENACT services through REST APIs and WebSockets. The design also anticipates continuous retraining, so the component can adjust as the environment evolves.

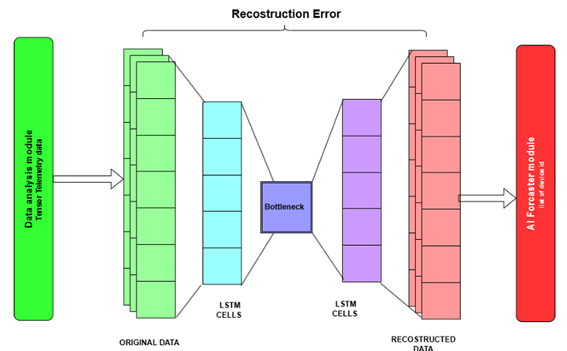
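The three-module structure of the Forecaster can be illustrated with a minimal sketch. Everything below is an assumption made for illustration: the function names, the clamping rule, the payload fields, and the metric name are hypothetical, and simple exponential smoothing stands in for whatever trained model ENACT actually uses.

```python
import json

def analyze(raw_samples):
    """Data Analysis Module stand-in: drop missing readings, clamp to 0..100 percent."""
    cleaned = [s for s in raw_samples if s is not None]
    return [min(100.0, max(0.0, float(s))) for s in cleaned]

def forecast(history, horizon=3, alpha=0.5):
    """AI Forecasting Module stand-in: simple exponential smoothing.

    This is NOT the ENACT model; it only keeps the sketch self-contained.
    """
    if not history:
        raise ValueError("history must not be empty")
    level = history[0]
    for value in history[1:]:
        level = alpha * value + (1 - alpha) * level
    # Simple smoothing yields a flat forecast at the last smoothed level.
    return [level] * horizon

def to_message(metric, predictions):
    """Data Exchange Module stand-in: a JSON payload (hypothetical schema)
    that could be sent over a REST API or a WebSocket."""
    return json.dumps({"metric": metric, "predictions": predictions})

# Hypothetical CPU telemetry with one missing and one out-of-range reading.
raw_cpu = [40, None, 50, 60, 120]
history = analyze(raw_cpu)
predictions = forecast(history, horizon=2)
message = to_message("cpu_usage_percent", predictions)
print(message)
```

Keeping the three stages as separate functions mirrors the modular split described above: the forecasting stage could be swapped for a retrained model without touching preprocessing or data exchange.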

Closely linked to the Forecaster is the Anomaly Detection function. While forecasting looks ahead, anomaly detection focuses on identifying behaviors that deviate from what the platform considers normal. In ENACT, this function is integrated into the broader AI Forecaster logic and relies on LSTM-based autoencoders to detect potentially problematic situations in infrastructure behavior. This is especially relevant for a continuum environment, where failures, performance drops, or unusual patterns may emerge across heterogeneous nodes and services. By detecting anomalies early, ENACT strengthens the robustness of the platform and gives higher-level components the opportunity to react before a problem escalates.

Figure 2 – Anomaly detection architecture.
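Autoencoder-based anomaly detection usually works by flagging inputs that the model reconstructs poorly. The sketch below shows only that thresholding logic, under stated assumptions: the `reconstruct` function is a trivial stand-in for a trained LSTM autoencoder (it always returns an averaged "normal" profile), and the window values, the `k = 3` rule, and all names are hypothetical rather than taken from ENACT.

```python
from statistics import mean, pstdev

def fit_profile(normal_windows):
    """Average the normal windows element-wise to get the stand-in 'model'."""
    return [mean(column) for column in zip(*normal_windows)]

def reconstruct(window, profile):
    """Stand-in for the autoencoder: always returns the learned normal profile.

    A real LSTM autoencoder would encode and decode the window itself.
    """
    return profile

def reconstruction_error(window, reconstructed):
    """Mean squared error between a window and its reconstruction."""
    return mean((x - y) ** 2 for x, y in zip(window, reconstructed))

def fit_threshold(normal_windows, profile, k=3.0):
    """Threshold = mean + k * standard deviation of errors on normal data."""
    errors = [reconstruction_error(w, reconstruct(w, profile)) for w in normal_windows]
    return mean(errors) + k * pstdev(errors)

def is_anomalous(window, profile, threshold):
    """Flag a window whose reconstruction error exceeds the threshold."""
    return reconstruction_error(window, reconstruct(window, profile)) > threshold

# Hypothetical CPU-usage windows observed during normal operation.
normal_windows = [[50, 52, 51], [49, 51, 50], [51, 53, 52]]
profile = fit_profile(normal_windows)
threshold = fit_threshold(normal_windows, profile)

print(is_anomalous([51, 53, 52], profile, threshold))  # a window seen during normal operation
print(is_anomalous([50, 95, 51], profile, threshold))  # a window with a sudden spike
```

The design choice worth noting is that the threshold is calibrated on normal data only, so no labeled failures are needed, which suits environments where anomalies are rare and varied.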

Another major element is the Virtual Training Environment (VTE). This component makes the cognitive services easier to manage and reuse. Rather than treating AI development as an opaque backend activity, ENACT provides a user-oriented workbench for training, retraining, executing, and managing AI models. The VTE has a modular architecture comprising a Model Manager, an Execution Engine, support for Federated Learning, and interfaces for integrating custom or externally trained models. It also incorporates Explainable AI (XAI) capabilities, such as SHAP charts, to help users understand why a model makes a given prediction. This matters because trust and transparency are essential if AI is going to guide orchestration decisions in complex environments.
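To give a feel for what a SHAP chart displays, the sketch below computes exact Shapley values by brute force for a tiny model. It does not use the SHAP library and is not the VTE's implementation: the latency model, its coefficients, the feature values, and the baseline are all hypothetical, and "absent" features are simply replaced by baseline values, which is one common simplification.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, instance, baseline):
    """Exact Shapley attribution of model(instance) - model(baseline).

    Enumerates every feature subset, so it is only practical for a
    handful of features.
    """
    n = len(instance)

    def value(subset):
        # Features in the subset take the instance value, the rest the baseline.
        mixed = [instance[i] if i in subset else baseline[i] for i in range(n)]
        return model(mixed)

    contributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                phi += weight * (value(members | {i}) - value(members))
        contributions.append(phi)
    return contributions

def predicted_latency_ms(features):
    """Hypothetical linear latency model over (cpu %, memory %, queue length)."""
    cpu, memory, queue = features
    return 5.0 + 0.5 * cpu + 0.25 * memory + 2.0 * queue

instance = [80.0, 40.0, 3.0]   # the prediction being explained
baseline = [40.0, 40.0, 1.0]   # a reference "typical" state
phi = shapley_values(predicted_latency_ms, instance, baseline)
for name, contribution in zip(["cpu", "memory", "queue"], phi):
    print(f"{name}: {contribution:+.2f} ms")
```

For a linear model, each attribution reduces to the coefficient times the feature's deviation from the baseline, and the attributions sum to the difference between the explained prediction and the baseline prediction. Those two properties make the output easy to sanity-check.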

The AI-Orchestrator, in turn, brings this intelligence into action. This component is the execution layer that receives AI-generated deployment plans and applies them across the cloud-edge-fog continuum. Its core innovation lies in combining DRL with GNNs. The GNN captures the graph-structured relationships between tasks and devices, while the DRL agent learns how to choose placements that optimize outcomes such as latency, energy consumption, and resource use. In this way, orchestration moves beyond static rules or heuristics and becomes a form of adaptive decision-making grounded in learned behavior.
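The division of labor between the graph model and the learning agent can be sketched in a heavily simplified form. The code below is not the ENACT AI-Orchestrator: a single mean-aggregation step stands in for a GNN layer, a one-step (bandit-style) value update stands in for deep reinforcement learning, and the topology, free-CPU figures, reward, and all names are hypothetical.

```python
import random

def aggregate(features, neighbors):
    """One mean-aggregation message-passing round over the device graph.

    Each device blends its own feature with the average of its neighbors',
    so the result reflects local topology as well as local state.
    """
    embedded = {}
    for node, own in features.items():
        nearby = [features[n] for n in neighbors[node]]
        context = sum(nearby) / len(nearby) if nearby else own
        embedded[node] = 0.5 * own + 0.5 * context
    return embedded

def choose_device(q_values, epsilon, rng):
    """Epsilon-greedy selection: explore with probability epsilon, else exploit."""
    candidates = sorted(q_values)
    if rng.random() < epsilon:
        return rng.choice(candidates)
    return max(candidates, key=q_values.get)

def update(q_values, device, reward, learning_rate=0.5):
    """Move the value estimate of the chosen device toward the observed reward."""
    q_values[device] += learning_rate * (reward - q_values[device])

# Hypothetical three-tier topology with the free CPU fraction per device.
free_cpu = {"cloud": 0.9, "fog": 0.5, "edge": 0.2}
links = {"cloud": ["fog"], "fog": ["cloud", "edge"], "edge": ["fog"]}

embedding = aggregate(free_cpu, links)
q_values = dict(embedding)        # initialise value estimates from the embeddings
rng = random.Random(0)            # seeded for reproducibility

device = choose_device(q_values, epsilon=0.0, rng=rng)  # purely greedy choice
update(q_values, device, reward=1.0)                    # hypothetical reward signal
print(device, q_values)
```

In a fuller version the reward would be derived from observed latency, energy consumption, or resource use, and both the graph encoder and the policy would be neural networks trained jointly.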

What makes these Cognitive Services especially meaningful is that they are not isolated research elements. They are clearly designed to interact with the wider ENACT platform: the Data Layer provides telemetry and training data, the DGM offers graph-based representations of the continuum, the SDK/APM supports integration, and the Orchestrator enforces the resulting decisions. This gives ENACT a coherent intelligence loop in which the platform can observe the environment, learn from it, generate insights, and translate those insights into deployment actions.

Taken together, the Cognitive Services show how ENACT is moving beyond conventional infrastructure management. Instead of relying only on static orchestration and manual monitoring, the project is building a continuum that can progressively forecast demand, detect abnormal behavior, train and refine its own models, and optimize workload distribution with AI-based reasoning. That combination gives substance to ENACT’s cognitive ambition and makes the platform more than a simple distributed computing framework.